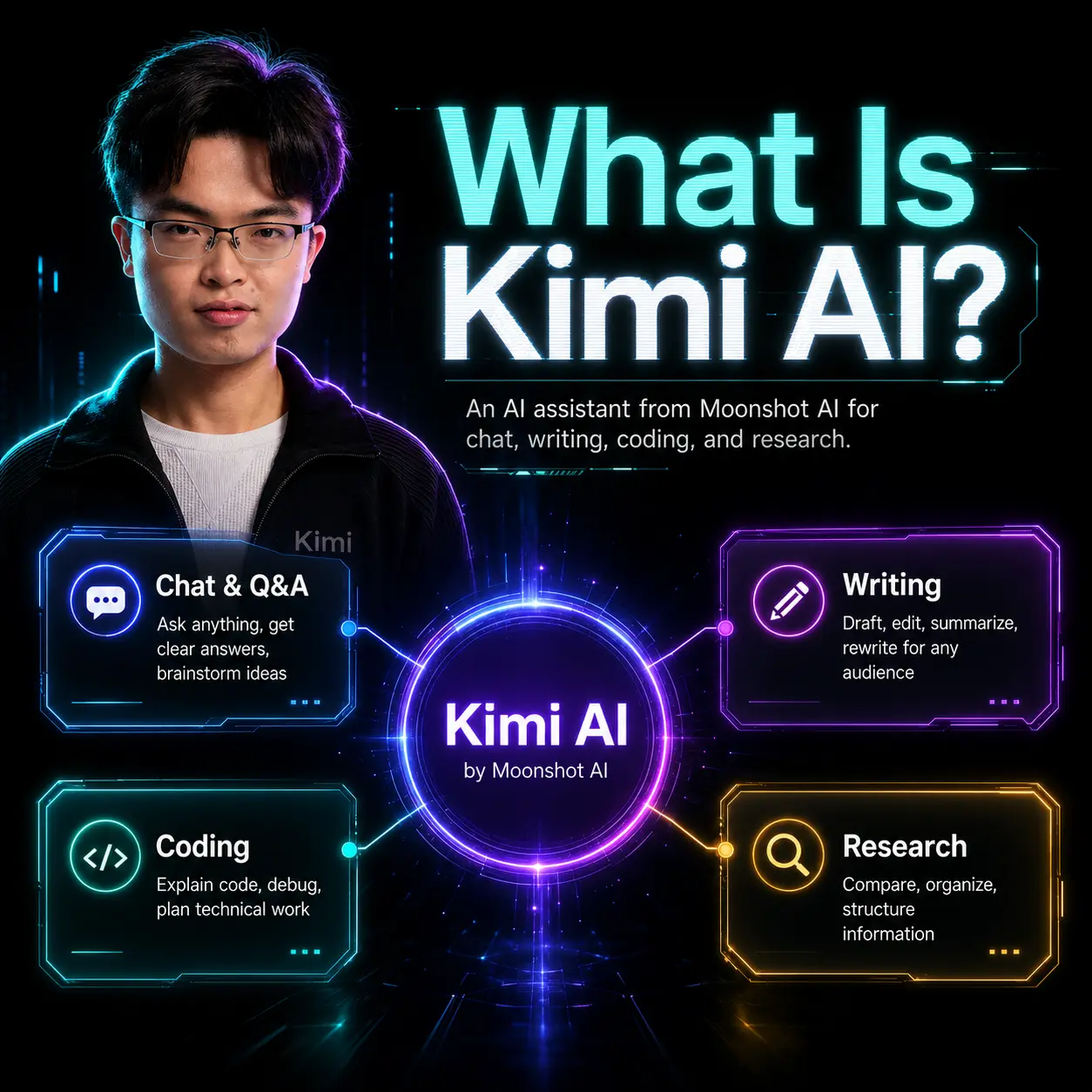

What is Kimi AI?

Kimi AI is an intelligent automation platform built to accelerate business decision-making, content workflows and customer engagement through generative and retrieval-augmented capabilities. It combines large language model interfaces with task orchestration, data connectors and fine-tuning tools to serve product, marketing and operations teams.

The product sits in the category of enterprise AI assistants and workflow automation platforms. It is marketed as a business-grade conversational intelligence layer that integrates with an organisation's existing SaaS stack and data sources, rather than as a consumer chatbot.

Kimi AI originated as a response to the need for actionable, context-aware assistance inside business systems: a way to turn organisational knowledge, CRM records and unstructured content into repeatable, automatable workflows that executives and frontline teams can use without engineering support.

At a strategic level the platform’s value proposition is simple: reduce cycle time for insight-to-action by making analysis, content generation and routine decisioning reliable, auditable and repeatable across marketing, sales and operations. For executives, the appeal is measurable productivity uplift, lower operational friction and faster route-to-market for campaigns and product launches.

Key insights

- Kimi AI combines conversational LLM interfaces with connectors to enterprise data, enabling retrieval-augmented responses that reference live business context.

- The platform targets business outcomes—content throughput, customer response automation, and decision support—rather than only conversational novelty.

- Kimi AI supports customisation and governance features required for enterprise deployment: role controls, audit logs and model tuning pipelines.

- Deployment options often include cloud-hosted SaaS and private-instance configurations to manage data residency and compliance.

- Adoption typically follows pilot-to-scale patterns: small cross-functional pilots, followed by focused integration into marketing automation or CRM workflows.

Business Problems It Solves

Kimi AI addresses predictable operational bottlenecks created by information silos, slow content production and manual customer responses.

- Content bottlenecks: accelerates copywriting, multi-channel repurposing and A/B variant generation to compress campaign timelines.

- Customer response overhead: automates first-line responses and routing while escalating novel issues to humans, preserving SLA compliance.

- Knowledge retrieval: converts unstructured repositories into queryable knowledge that reduces time to insight for sales and product teams.

- Decision support: synthesises multi-source inputs to produce concise executive summaries or recommended actions for portfolio and pricing decisions.

Kimi AI Features

This section maps platform capabilities directly to the business outcomes that CEOs, founders and CMOs care about: speed, repeatability, compliance and scale.

Retrieval-Augmented Generation (RAG)

Business Value: RAG ensures outputs are grounded in a company’s own documents, CRM entries and product data, reducing hallucination risk and enabling auditable recommendations. For marketing and product teams this means faster, evidence-backed creative briefs and campaign rationales that reference source material.

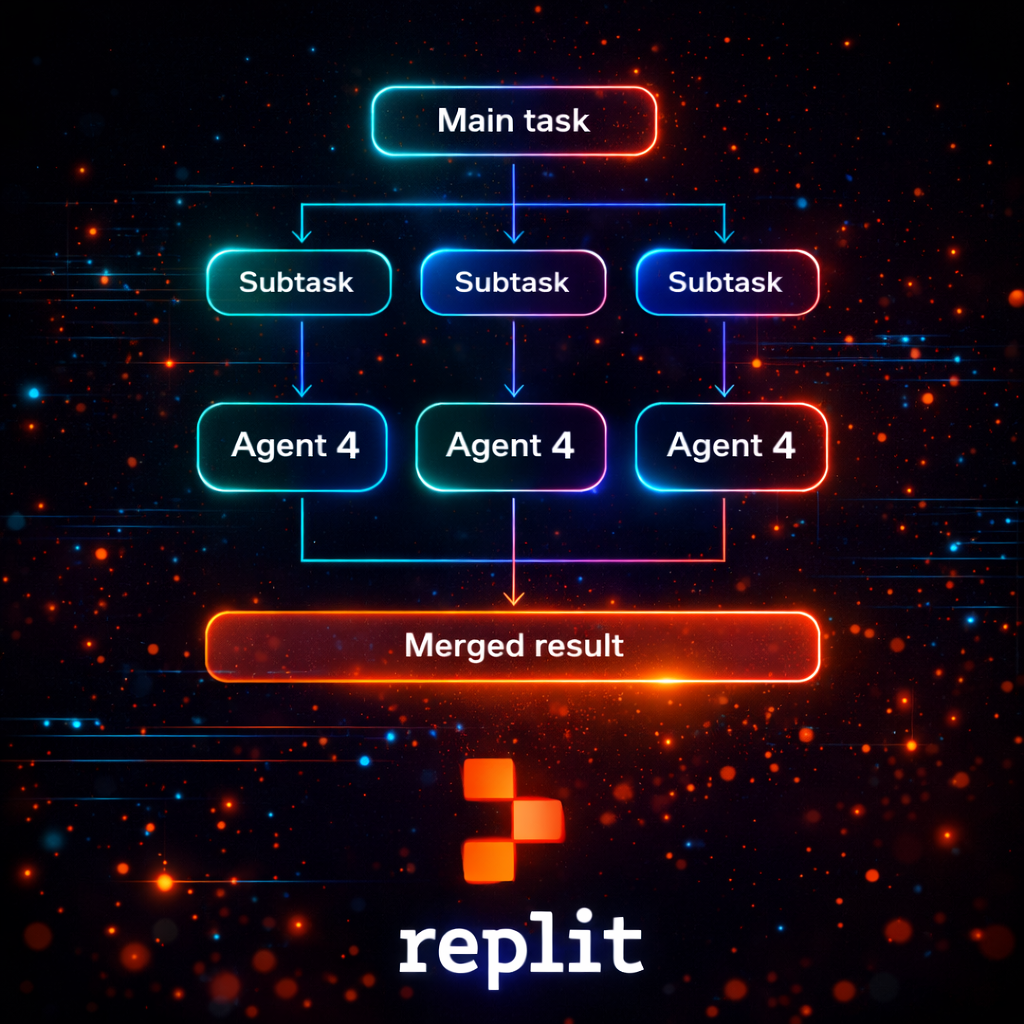

Workflow Orchestration

Business Value: Built-in orchestration converts one-off prompts into repeatable processes—content templates, review gates and approval handoffs—so that teams can scale campaigns without linear increases in headcount or coordination overhead.

Integrations and Connectors

Business Value: Native connectors to CRM, analytics and content repositories allow the platform to act on real-time signals (lead scores, churn indicators) and automate targeted actions—improving conversion rates and reducing lead response time.

Model Tuning and Customisation

Business Value: Fine-tuning and instruction-tuning permit organisations to infuse brand voice, vertical knowledge and regulated phrasing into outputs, delivering consistent external communications that comply with legal or sector-specific constraints.

Auditability and Governance

Business Value: Logs, decision trails and role-based controls enable compliance teams and legal counsel to trace how recommendations were generated, supporting regulated deployments in finance, healthcare or public sector settings.

Assistant Templates and Agents

Business Value: Pre-built agent templates for common functions—customer support triage, sales playbooks, PR responses—cut implementation time and provide immediate ROI by replicating best-practice workflows.

Multimodal Inputs

Business Value: Where supported, multimodal ingestion of documents, screenshots or meeting notes reduces friction for knowledge capture and accelerates briefing cycles for product and marketing teams.

Main Strategic Use Cases

Kimi AI has been positioned to support strategic levers where speed and consistency materially affect business outcomes.

- Go-to-market acceleration: automate persona-based content variants and sales enablement assets to reduce campaign lead time.

- Customer experience optimisation: implement AI-assisted routing and knowledge-based responses to improve NPS and lower support costs.

- Product management: summarise feature feedback and prioritise backlogs using signal-weighted synthesis from user research.

- Competitive intelligence: monitor public content and internal sales notes to produce concise competitor threat assessments.

Business Operations Use Cases

Operational deployments focus on repeatability, measurable KPIs and integration into existing SLAs and systems.

- Sales enablement: automated playbooks triggered by opportunity stage changes to guide reps with rebuttals, competitive points and content links.

- Support automation: first-response automation with escalation triggers and sentiment-aware routing to reduce mean time to resolution.

- Compliance workflows: templated document drafting with embedded policy checks to speed contract and disclosure processes.

- HR and internal comms: automated summarisation of town-hall notes and policy updates to reduce decision friction across distributed teams.

Alternatives and Competitor Tools

Market alternatives span specialist agents, large LLM vendors and workflow platforms that integrate generative capabilities.

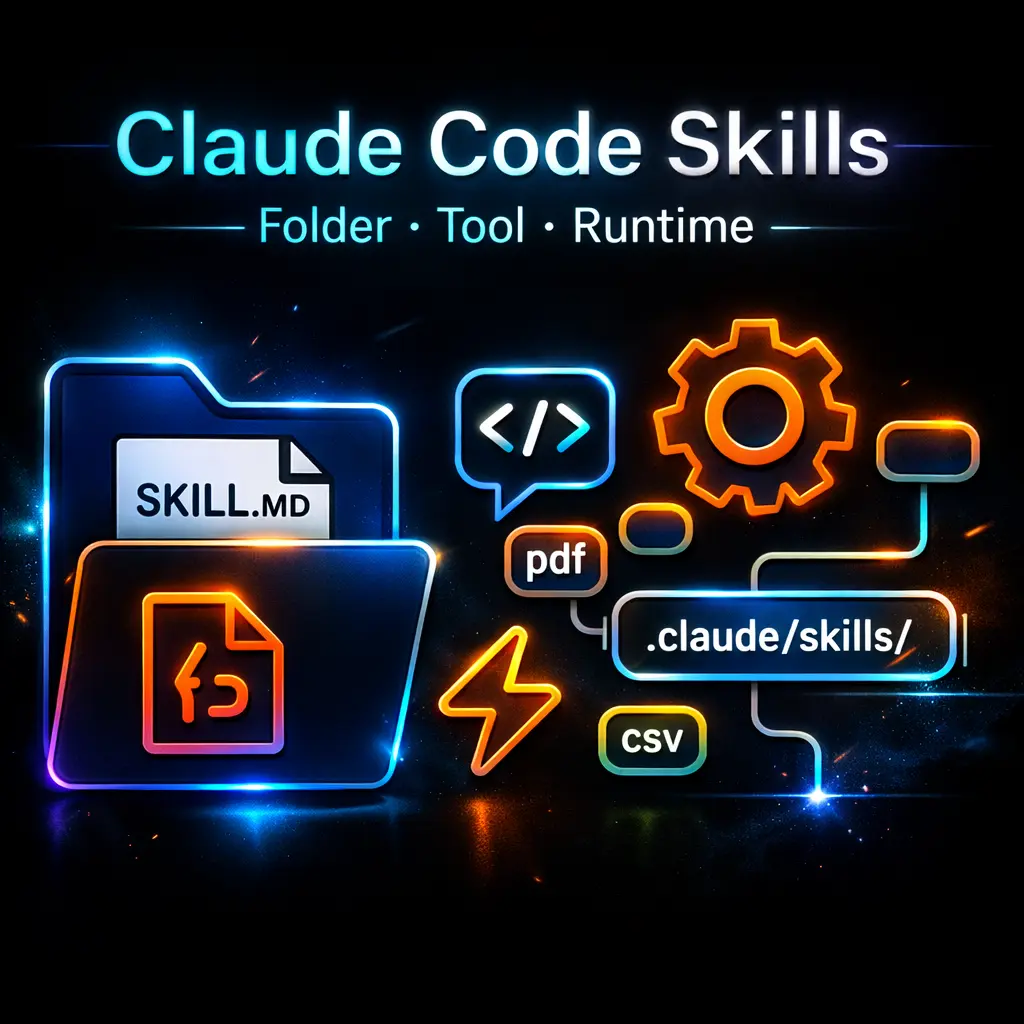

Anthropic Claude

Claude is positioned as an assistant-first LLM with safety-focused training and features for conversational workflows, often used for aligned, low-hallucination assistant tasks. Compared with Kimi AI, Claude emphasises model alignment and conversational safety while Kimi AI typically packages integrations and workflow orchestration around the assistant layer.

OpenAI (ChatGPT + Plugins)

OpenAI provides powerful generative models and a rich plugin ecosystem that enables integrations with external data. The strategic difference is that OpenAI focuses on model capability and extensible plugin connectivity, whereas Kimi AI focuses on pre-configured business workflows and governance for enterprise use.

Specialist Automation Platforms

Tools such as RPA vendors and low-code automation platforms provide robust process automation but lack native, enterprise-grade generative intelligence. Businesses that need heavy structured-process automation with limited generative output might prefer these platforms over a generative-first approach.

For businesses that prioritise end-to-end workflow automation and governance around generative outputs, Kimi AI is the better fit; for teams that need raw model capability or an assistant with a safety-first research approach, options like Claude or OpenAI plugins may be preferable.

Comparison: Kimi AI vs Claude

This comparison highlights where strategic decision-makers should weigh trade-offs between a workflow-centric platform and a model-centric assistant.

The central question is when to use an assistant that prioritises operational integrations and governance, and when to favour a model designed around conversational alignment and safety.

For teams evaluating assistant behaviour within complex enterprise workflows, the orchestration and audit capabilities of a platform like Kimi AI can outweigh marginal model improvements.

To research Anthropic’s agentic workspace and alternative assistant design tools, executives can review materials on Claude Cowork and on the broader design tooling in the Claude ecosystem, such as the Claude AI Design Tool, and consider how agent behaviour differs in real deployments.

| Category | Kimi AI | Claude (Anthropic) |
|---|---|---|
| Primary focus | Workflow orchestration and enterprise integrations for repeatable business processes | Model alignment and conversational safety for assistant interactions |
| Integration depth | Native connectors to CRM, analytics and content repositories; enterprise-grade audit trails | API and plugin model; strong conversational API but fewer pre-built enterprise workflows |
| Governance & compliance | Role-based controls, logging and private deployment options tailored for enterprise | Safety-focused training and guardrails; enterprise features vary by deployment |
| Customisation | Fine-tuning, instruction templates and tailored agent flows for business processes | Prompting and safety tuning; extensions via plugins for data access |
| Best fit | Teams needing repeatable, auditable actions across sales, marketing and support | Organisations prioritising conversational alignment and research-backed safety |
| Speed to value | Pilot-to-production templates reduce implementation time for business workflows | Rapid prototyping for conversational assistants but additional integration work required |

Benefits & Risks

Adopting generative business assistants presents measurable benefits and known risks that require governance.

- Benefits: faster content cycles, lower response times, standardised decision support, and improved knowledge reuse across teams.

- Risks: model hallucination, data leakage, biased outputs and overreliance on automated decisions without human oversight.

- Mitigations: enforce human-in-the-loop for high-risk decisions, apply retrieval-augmentation, maintain training data provenance and apply role-based controls.

Executive Summary

For executives evaluating where to invest in AI-driven productivity, Kimi AI should be considered a workflow-first enterprise assistant that trades raw model novelty for operational reliability, auditability and faster route-to-deployment inside business systems. If you operate in regulated industries or depend on repeatable customer and go-to-market processes, the platform’s orchestration and governance capabilities yield clearer ROI than a standalone conversational model.

Decision-helper: choose Kimi AI when your primary need is scalable, auditable automation embedded into CRM and marketing stacks; choose model-first alternatives if your core requirement is exploratory conversational capability or advanced model experimentation.

Misconceptions and Myths

Mistake: AI will replace subject-matter experts overnight.

Correction: AI platforms amplify expert productivity but do not replace domain expertise. Best deployments embed human oversight and use AI to reduce routine work rather than supplant judgement.

Mistake: All LLMs are interchangeable.

Correction: Models differ in training data, alignment priorities and API integrations; choice should be based on fit for workflow, governance and data residency requirements.

Mistake: Generative outputs are automatically compliant.

Correction: Outputs must be validated against legal and regulatory requirements; compliance requires templates, policy checks and audit logs to be effective.

Mistake: Faster content generation means better performance.

Correction: Speed reduces time-to-market, but effectiveness depends on targeting, measurement and iterative optimisation—quality controls remain essential.

Mistake: On-premise equals secure by default.

Correction: Private hosting reduces some risks but security depends on configuration, patching, and identity management; cloud-hosted offerings can also be secured to high standards.

Key Definitions

Retrieval-Augmented Generation (RAG)

A method that combines search over a document corpus with a generative model so responses are grounded in retrieved evidence rather than relying solely on model memory.
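
To make the definition concrete, here is a minimal sketch of the retrieve-then-generate pattern. It is an illustration only, not Kimi AI's implementation: simple word overlap stands in for the embedding search a production system would likely use, the generative model call is left out, and every name in it (`CORPUS`, `retrieve`, `build_prompt`) is hypothetical.

```python
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, document: str) -> int:
    """Count query tokens that also occur in the document (with multiplicity)."""
    query_counts = Counter(tokenize(query))
    doc_counts = Counter(tokenize(document))
    return sum(min(n, doc_counts[tok]) for tok, n in query_counts.items())

def retrieve(query: str, corpus: dict[str, str], top_k: int = 2) -> list[str]:
    """Return ids of the best-matching documents, skipping zero-score ones."""
    ranked = sorted(corpus, key=lambda doc_id: score(query, corpus[doc_id]), reverse=True)
    return [doc_id for doc_id in ranked[:top_k] if score(query, corpus[doc_id]) > 0]

def build_prompt(query: str, corpus: dict[str, str], top_k: int = 2) -> str:
    """Assemble a prompt that cites retrieved passages by id for auditability."""
    sources = [f"[{doc_id}] {corpus[doc_id]}" for doc_id in retrieve(query, corpus, top_k)]
    return "Answer using only the sources below.\n" + "\n".join(sources) + f"\nQuestion: {query}"

# Hypothetical knowledge base for illustration only.
CORPUS = {
    "pricing": "The Pro plan costs 40 euros per seat per month.",
    "refunds": "Refunds are issued within 14 days of purchase.",
    "roadmap": "The mobile app is planned for the next quarter.",
}

if __name__ == "__main__":
    print(build_prompt("How much does the Pro plan cost per seat?", CORPUS, top_k=1))
```

The prompt returned by `build_prompt` would then be passed to a generative model. Keeping the source ids in the prompt is what lets a reviewer trace an answer back to the evidence it was built from.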

Large Language Model (LLM)

A neural network trained on large corpora of text capable of generating and understanding human-like language; used as the core generative engine in conversational AI.

Human-in-the-loop (HITL)

An operational pattern where humans review, correct or approve AI outputs as part of a controlled workflow to manage risk and improve accuracy.
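
The pattern can be sketched as a simple approval gate. This is a hedged illustration under stated assumptions: the `Draft` type, the `risk` labels and the status strings are all hypothetical, and how risk gets assigned is assumed to happen upstream.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Draft:
    text: str
    risk: str               # "low" or "high"; assumed to be set by an upstream classifier
    approved: bool = False

def needs_review(draft: Draft) -> bool:
    """High-risk drafts must be seen by a human before release."""
    return draft.risk == "high"

def release(draft: Draft, reviewer_decision: Optional[bool] = None) -> str:
    """Return the draft's status after applying the human-in-the-loop gate."""
    if not needs_review(draft):
        draft.approved = True
        return "sent"
    if reviewer_decision is None:
        # No human decision yet, so the draft waits in the review queue.
        return "pending_review"
    draft.approved = reviewer_decision
    return "sent" if reviewer_decision else "rejected"
```

A low-risk reply goes straight out, while a high-risk one returns `"pending_review"` until a reviewer passes `True` or `False`.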

Orchestration

The automation of multi-step processes—triggering actions, approvals and integrations—so that complex workflows run reliably with minimal manual intervention.
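
As a rough sketch of what this means in practice, the example below chains a drafting step, an approval gate and a publishing step, and records which steps ran. The step names and the `state` dictionary layout are assumptions made for illustration; they do not describe any specific product's workflow engine.

```python
from typing import Callable

Step = Callable[[dict], dict]

def draft_step(state: dict) -> dict:
    """Stand-in for a generation step: produce a draft from the brief."""
    return {**state, "draft": f"Draft for: {state['brief']}"}

def review_gate(state: dict) -> dict:
    """Stand-in for an approval handoff: halt unless an approver is recorded."""
    if not state.get("approved_by"):
        return {**state, "halted": True}
    return state

def publish_step(state: dict) -> dict:
    """Stand-in for the final action, such as pushing content to a channel."""
    return {**state, "published": True}

def run_workflow(steps: list[Step], state: dict) -> dict:
    """Run steps in order, stop at the first halt, and keep a trail of steps run."""
    trail = []
    for step in steps:
        state = step(state)
        trail.append(step.__name__)
        if state.get("halted"):
            break
    return {**state, "trail": trail}
```

Calling `run_workflow([draft_step, review_gate, publish_step], {"brief": "Q3 launch"})` stops at the gate because no approver is recorded; adding an `approved_by` entry lets the same workflow run through to publication. The `trail` list is a minimal stand-in for the audit log an enterprise platform would keep.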

Model Alignment

Training and fine-tuning practices designed to make model outputs conform to safety, ethical and brand guidelines relevant to the deploying organisation.

Frequently Asked Questions

How does Kimi AI connect to my CRM and data sources?

Most deployments use native connectors or secure APIs to map CRM fields, content repositories and analytics platforms into the assistant’s knowledge layer. Integration patterns are typically configurable to control which data is available for retrieval and to enforce role-based access.
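
One way to picture role-based control over retrievable data is a per-role allow-list applied to each CRM record before it reaches the knowledge layer. The sketch below assumes hypothetical role names and field names; a real connector would define its own schema and permission model.

```python
# Hypothetical mapping of roles to the CRM fields each may expose to the assistant.
ROLE_FIELDS = {
    "sales_rep": {"name", "stage", "lead_score"},
    "support_agent": {"name", "open_tickets"},
}

def visible_record(record: dict, role: str) -> dict:
    """Return only the fields this role is allowed to retrieve.

    Unknown roles get an empty record, so access is denied by default.
    """
    allowed = ROLE_FIELDS.get(role, set())
    return {field: value for field, value in record.items() if field in allowed}

# Illustrative record; the values are made up.
ACCOUNT = {
    "name": "Acme",
    "stage": "proposal",
    "lead_score": 82,
    "open_tickets": 3,
    "contract_value": 120000,
}
```

Here `visible_record(ACCOUNT, "support_agent")` keeps only the name and ticket count, and `contract_value` is never exposed because no role lists it.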

What governance features are necessary for enterprise use?

Essential controls include audit logs, role-based permissions, data provenance tracking and the ability to enforce human approval for high-risk outputs. For regulated sectors, data residency and model tuning records are also critical.

When should you use Kimi AI rather than a raw model API?

Use Kimi AI when you require integrated workflows, repeatability, audit trails and connectors that convert AI outputs into automated business actions. If you only need an experimental conversational model, raw APIs may be sufficient.

If you operate in Ukraine, are there localisation considerations?

For businesses in Ukraine, check for local language support, latency and localised customer service channels. Ensure deployments align with Ukrainian data protection rules and consider hybrid or private-instance deployment to meet data residency needs.

How do you manage hallucinations and factual errors?

Apply retrieval-augmentation, prompt engineering and human-in-the-loop validation for outputs used in customer-facing or regulatory contexts. Regularly monitor error patterns and retrain or tune models with corrected data.

What is the typical implementation timeline?

Pilots that prove business value can run in 4–8 weeks, with broader roll-out phases taking 3–6 months depending on integration complexity, compliance requirements and change management readiness.

For businesses that need high security, what deployment options exist?

Secure options include private cloud instances, virtual private cloud deployments and on-premise installations combined with strict IAM (identity and access management) and encryption protocols to meet enterprise security standards.

How should a business measure ROI for an AI assistant?

Track metrics such as content throughput, campaign velocity, lead response time, customer satisfaction (NPS) and cost per support ticket. Combine efficiency KPIs with outcome metrics to demonstrate strategic impact on revenue and retention.
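
A simple way to start is to compare each KPI before and during a pilot. The sketch below computes the percentage change per metric; the metric names and all figures are hypothetical and serve only to show the arithmetic.

```python
def pct_change(baseline: float, current: float) -> float:
    """Percentage change from baseline to current; negative means a reduction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100

def kpi_report(baseline: dict, pilot: dict) -> dict:
    """Compare every KPI present in both snapshots, rounded to one decimal place."""
    return {
        name: round(pct_change(baseline[name], pilot[name]), 1)
        for name in baseline
        if name in pilot
    }

# Hypothetical figures for illustration only.
BASELINE = {"lead_response_minutes": 120, "cost_per_ticket": 8.0, "assets_per_week": 10}
PILOT = {"lead_response_minutes": 90, "cost_per_ticket": 6.0, "assets_per_week": 15}

if __name__ == "__main__":
    for metric, change in kpi_report(BASELINE, PILOT).items():
        print(f"{metric}: {change:+.1f}%")
```

Whether a change is good depends on the metric: a fall in response time or cost per ticket is an improvement, while a fall in content throughput is not, so each figure should be read alongside outcome metrics such as revenue and retention.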